That feels better - Cocoa, Hudson and running green

Continuous Integration

Continuous Integration (CI) has been around for a while now. Popularized in the Java/Ruby/[lang] communities, CI, when properly implemented, promotes good code practices. CI alone won't guarantee great code, but it helps support good behavior and in fact rewards users routinely and reliably.

I've used Continuous Integration in many former lives - CI was essential on large geographically distributed teams - driving out incompatibilities in interface and implementation early and often.

My definition of a successful CI system and implementation:

- Automated and unattended application build

- Automated and unattended test execution

Everything beyond that is gravy (or sugar).

CI & the indie

When I released my first iPhone app, I was building the project in Xcode, switching to Finder and/or Terminal.app, compressing, copying and generally screwing up at every possible step. Although I've seen the benefits of automation multiple times, I was so busy getting this app out that I couldn't see how I could take the time to write scripts. That airlock of paradox didn't last long. I wrote a few scripts and every aspect of my build/sign/archive workflow was automated - when I ran the script.

I repeated this exercise for my first Mac product - this time a hodge-podge of scripts to build the app, generate the help files, generate the Sparkle appcast and release notes, upload, etc. I still use this script and it works great - when I run the script.

Although I've had great success with CI in the past, I wasn't convinced that my one workunit indie shop could or would benefit from implementing CI. There were a few things that helped turn me around on this:

- The weakness of my script based build system continues to be the user centered part - me running the scripts. As I bounce from machine to machine, branch to branch, tucking frameworks away on one machine and not replicating them to another, [insert favorite 'in the heat of the battle' screwup here], issues might not emerge for some time.

- Increased desire to capture metrics and data about my personal development process. I'm not implementing heavyweight metrics, but I understand absolutely that data can empower me to make decisions - test data, build data, coverage data.

- Renewed belief that removing rote, non-value-adding activities from my routine will increase my effectiveness and throughput.

Rule #1 of CI - Automated and Unattended Build

When something changes, your CI should build the system to ensure that nothing has broken. If you're in the zone and a failure pops up, it's easy to fix. If you find an issue weeks later - well, we've all been there.

CI is all about automating those rote tasks. It is important to emphasize both the automated aspects as well as the unattended aspects of CI. The only thing worse than no CI is CI that is broken and neglected. We'll come back to this point in a bit.

Hudson CI & Cocoa

There are several compelling CI solutions in the market - CruiseControl, Hudson and scores of others in the open source space. There is a spectrum of commercially available solutions as well, including Bamboo. To my knowledge, there are no CI solutions that focus on the Cocoa space [just found BuildFactory - haven't checked it out]. The good news is that most of these systems can run external processes, and that is all a Cocoa dev needs to hook in a command line build.

Hudson seems to be the leading choice - it's really straightforward to get the basics working. From there, incremental tweaks should get you up and running.

Preparing for the move

I screwed up more than a few times getting my apps to build in Hudson. There are more than a few pages on the web that illustrate Cocoa/Hudson builds.

My suggestion is to ensure that you can take a fresh cut of your project from your SCM system, check it out to a new directory and build it clean.

I would encourage you to do this outside of your normal dev tree - it's surprising how easily a relative path will find its way into your Xcode build settings.

- cd /tmp

- checkout project to foobaz

- build

- if errors, rm -rf /tmp/foobaz, fix errors in main tree, check in, go back to the first step

This process should rid you of (many of) those hidden dependencies that will prevent a clean build once you're executing inside of Hudson.

Once you have a clean repeatable build - from your SCM system - you should move on to getting Hudson up and running.
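The rehearsal above can be sketched as a small script. This is only an illustration, not the script I use: `clean_build` is a hypothetical helper, it works in a throwaway temp directory rather than literally in /tmp/foobaz, and the checkout and build commands are whatever your SCM and project need.

```python
import shutil
import subprocess
import sys
import tempfile
from pathlib import Path


def clean_build(checkout_cmd, build_cmd, checkout_dir="foobaz"):
    """Check out into a fresh scratch directory, build there, then clean up.

    checkout_cmd: command (list of str) that creates `checkout_dir` in the
                  directory it is run from, e.g. a git clone.
    build_cmd:    command (list of str) run from inside the checkout.
    Returns True only when both commands exit with status 0.
    """
    scratch = Path(tempfile.mkdtemp(prefix="cleanbuild-"))
    try:
        if subprocess.run(checkout_cmd, cwd=scratch).returncode != 0:
            print("checkout failed", file=sys.stderr)
            return False
        tree = scratch / checkout_dir
        if not tree.is_dir():
            print("checkout did not create " + checkout_dir, file=sys.stderr)
            return False
        if subprocess.run(build_cmd, cwd=tree).returncode != 0:
            print("build failed: fix it in the main tree, check in, retry",
                  file=sys.stderr)
            return False
        return True
    finally:
        # Always remove the scratch tree so the next run starts from nothing.
        shutil.rmtree(scratch, ignore_errors=True)


# Example call (repository URL and target name are placeholders):
# clean_build(["git", "clone", "<your repo URL>", "foobaz"],
#             ["xcodebuild", "-target", "UnitTests", "clean", "build"])
```

Because the scratch directory is deleted on every run, anything the build needs that isn't in the repository shows up as a failure right away.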

Setting up Hudson

Installing Hudson is well documented on the net. The Hudson site includes installation instructions that work well. There are several examples on Cocoa-specific sites - I started here.

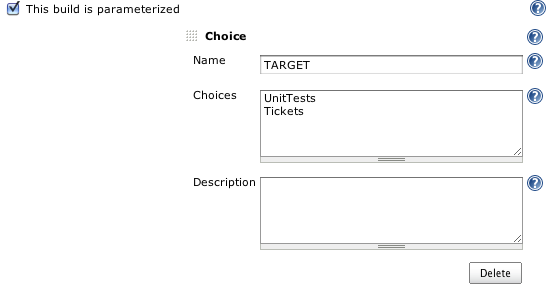

Because I have multiple targets set up in my Xcode project, I selected 'This build is parameterized' and added some targets to choose from. Hudson will remember your last choice.

Setting up SCM

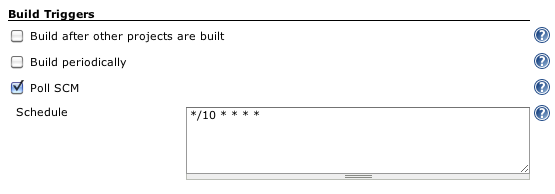

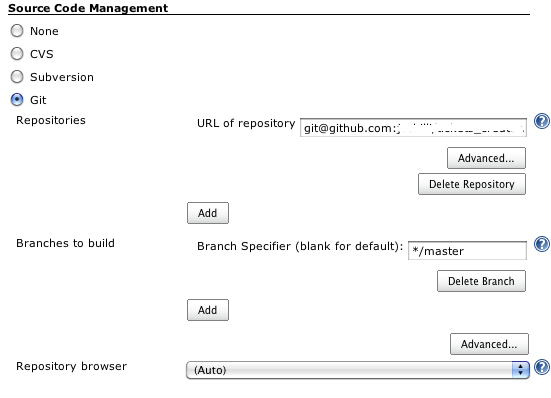

If you use Git, Christian Hedin's article covers that configuration as well. The critical thing is to use either SCM polling or a post-commit hook to invoke the build. Hudson will allow you to set up a time-based build, e.g. build every thirty minutes. The issue with that is that it will execute the build whether there are changes or not. Polling or post-commit hooks will ensure that builds are invoked only when a change occurs.

You will note that I've elected to only build my master branch - by default, Hudson will check out and build each branch that it finds in your Git repo. While I see this as advantageous (forward dev on master, branches for production release and bug fix), my branches haven't gotten the Hudson CI/gcov/unit testing love that master has.
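For the post-commit hook route, here is a rough sketch of what the hook could call. It assumes the job has Hudson's 'Trigger builds remotely' option enabled with an authentication token, and that the trigger URL has the shape `/job/<name>/build` (or `/buildWithParameters` for a parameterized job); check that against your own install. The host, job name, token and parameter name below are all made up.

```python
from urllib.parse import quote, urlencode
from urllib.request import urlopen


def trigger_url(base_url, job, token, params=None):
    """Build the remote-trigger URL for a job (assumed URL layout)."""
    # Parameterized jobs use a different endpoint than plain ones.
    endpoint = "buildWithParameters" if params else "build"
    query = {"token": token}
    query.update(params or {})
    return "{}/job/{}/{}?{}".format(
        base_url.rstrip("/"), quote(job), endpoint, urlencode(query))


def trigger_build(base_url, job, token, params=None, timeout=10):
    """Ask the CI server to start a build; returns the HTTP status code."""
    url = trigger_url(base_url, job, token, params)
    with urlopen(url, timeout=timeout) as response:
        return response.status


# Example call from a hook script (all values are placeholders):
# trigger_build("http://localhost:8080", "MyApp", "my-token",
#               {"TARGET": "UnitTests"})
```

A hook like this only fires when a commit lands, which is the whole point: no commit, no build.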

Setting up your project

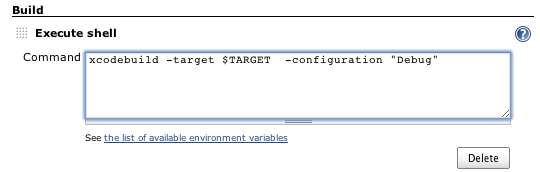

In the interest of walking before I run, I want my Hudson build to checkout my updated code, compile my code, execute unit tests and capture any reporting output for test coverage and unit test failures. It turns out that most of this is already performed when I build my UnitTests target in my Xcode projects.
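As a hedged sketch of what that build step boils down to: a parameterized job hands the chosen value to the build as an environment variable, and the step turns it into an xcodebuild call. The parameter names `TARGET` and `CONFIGURATION` and the helper `xcodebuild_command` are my own placeholders, not something Hudson or Xcode defines.

```python
import os


def xcodebuild_command(env=None, default_target="UnitTests"):
    """Assemble the xcodebuild command line for the selected target.

    env: mapping to read build parameters from (defaults to os.environ).
    """
    env = os.environ if env is None else env
    target = env.get("TARGET", default_target)          # hypothetical parameter
    configuration = env.get("CONFIGURATION", "Debug")   # hypothetical parameter
    # "clean build" forces a full rebuild so stale products can't hide errors.
    return ["xcodebuild", "-target", target,
            "-configuration", configuration, "clean", "build"]


# In a build step you would hand the result to subprocess, for example:
# import subprocess, sys
# sys.exit(subprocess.call(xcodebuild_command()))
```

Returning the exit status of xcodebuild matters: Hudson marks the build as failed when the step exits non-zero.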

Click on 'Build Now' - you can check the console to see the steps that Hudson is taking.

If the stars are aligned, you should have a successful build. If not, you'll need to crawl through the console logs to determine where the failure occurred.

It is critical that you go back to your Xcode project/standalone build directory and correct mistakes there. Check in your changes and repeat. No one has to know how many times you repeat this cycle, but it's critical to meet the spirit and law of Rule #1!

Sugar

Once the basic build is working you should add unit test reporting. If you have or are planning to run unit tests (Rule #2), download this ruby script, install it in /usr/local/bin or the directory of your choice and change your build step to look like this:

In the Post-build actions, configure Hudson to publish your test results.

Trigger a build and you'll now see a chart with the build results. As your test suite grows, you should see a trending graph with increased numbers of tests.
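To give a feel for what a converter like that does, here is a minimal Python stand-in. It is not the Ruby script mentioned above. It assumes OCUnit prints one line per test of the form `Test Case '-[Suite testName]' passed (0.003 seconds).` and writes the bare minimum of JUnit-style XML that a test report publisher typically expects.

```python
import re
import xml.etree.ElementTree as ET

# Assumed OCUnit console format, one line per finished test:
#   Test Case '-[ModelTests testAdd]' passed (0.003 seconds).
CASE_LINE = re.compile(
    r"Test Case '-\[(?P<suite>\S+) (?P<name>\S+)\]' "
    r"(?P<result>passed|failed) \((?P<seconds>[\d.]+) seconds\)"
)


def ocunit_to_junit(log_text, suite_name="UnitTests"):
    """Convert OCUnit-style console output into a JUnit-style XML string."""
    root = ET.Element("testsuite", name=suite_name)
    tests = failures = 0
    for line in log_text.splitlines():
        match = CASE_LINE.search(line)
        if not match:
            continue  # compiler chatter and other noise is ignored
        tests += 1
        case = ET.SubElement(root, "testcase",
                             classname=match.group("suite"),
                             name=match.group("name"),
                             time=match.group("seconds"))
        if match.group("result") == "failed":
            failures += 1
            ET.SubElement(case, "failure", message="test failed")
    root.set("tests", str(tests))
    root.set("failures", str(failures))
    return ET.tostring(root, encoding="unicode")
```

In a build step you would pipe the xcodebuild output through something like this and write the result to a file that the post-build action picks up.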

Code Coverage

Unit test execution is what we're after for Rule #2, but the number of tests as a key metric is easily misleading. I've seen a lot of cases where the same code is tested over and over again. Coverage is the key indicator!

Download gcovr and install it, again in /usr/local/bin.

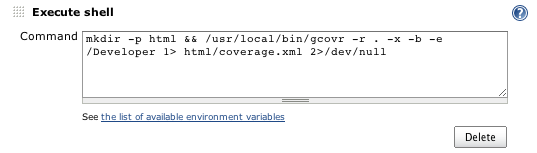

Add the following as a new build step (after the xcodebuild step)

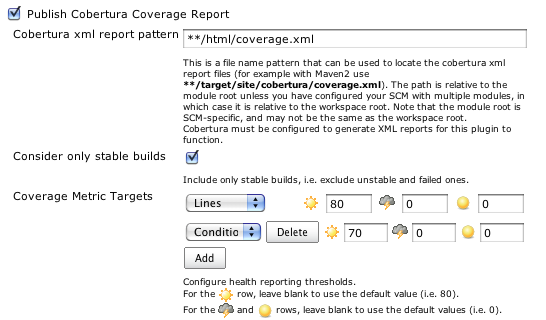

gcovr converts gcov data into a format parseable by Cobertura - a coverage analysis tool.
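A sketch of that conversion step, under assumptions: `gcovr_command` is a hypothetical helper, the output file name is arbitrary, and the flags are gcovr's `-r` (root directory), `-x` (XML output), `-o` (output file) and `-e` (exclude pattern). Your build step may well look different.

```python
import subprocess


def gcovr_command(root=".", output="coverage.xml", excludes=()):
    """Assemble a gcovr call that writes Cobertura-style XML to `output`."""
    cmd = ["gcovr", "-r", root, "-x", "-o", output]
    for pattern in excludes:
        # Each -e drops files matching the regular expression from the report.
        cmd += ["-e", pattern]
    return cmd


def write_coverage_report(**kwargs):
    """Run gcovr and return its exit status (requires gcovr on the PATH)."""
    return subprocess.run(gcovr_command(**kwargs)).returncode


# Example: leave the test classes themselves out of the coverage numbers.
# write_coverage_report(excludes=[".*Tests.*"])
```

Excluding the test code keeps the coverage percentage honest, since tests always cover themselves.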

(See Tommy McLeod's blog post here for some additional details)

Assuming you have gcov correctly working for your project (the subject of an as yet unwritten post), executing the build will result in some nice graphs.

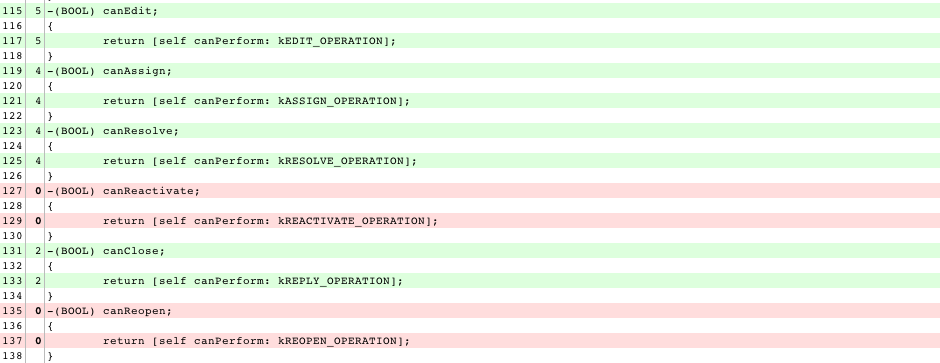

You can now navigate through the coverage reports and see your annotated source code including what's covered - and more importantly, what's not. (There's a one-line patch to gcovr detailed here that allows Cobertura/Hudson to navigate into your code)

[Edit 3/2/2010 - new example showing a real miss]

This example illustrates the value of visualizing test coverage. I had ~15 valid operations on a model class and wrote the code from the spec. I erroneously took running green on my unit tests to mean all was good. In fact, I had missed several cases - clearly identified here.

Finally

Make some changes in your project, commit them to your SCM system and monitor the build. Make a test fail, introduce a compiler error and monitor the results.

You want to be able to rely on your CI system to accurately report failures. If you have instability in the process, now is the time to grind through the issues.

You can install the Jabber notification plugin in Hudson and configure your Jabber address (or that of a group chat if you're working with multiple people), and Hudson will then inform you of build successes and failures. You can also configure email.

The compelling aspect of the Jabber plugin is that Hudson has a Jabber bot that you can use to get status, trigger builds and more.

What's left? There are a lot of different directions you can take Hudson now that the basics are in hand. I want to spend some more cycles getting better diagnostics when the build fails. Unit test failures are clearly reported. Compilation failures (forget to commit that new file to the build?) require spelunking through the console log. I also plan on moving my production builds to Hudson, but for now, getting that Jabber notification that the build is clean is totally worth the time I've invested in setting this up.